Best Practices Of LC-MS/MS Internal Standards


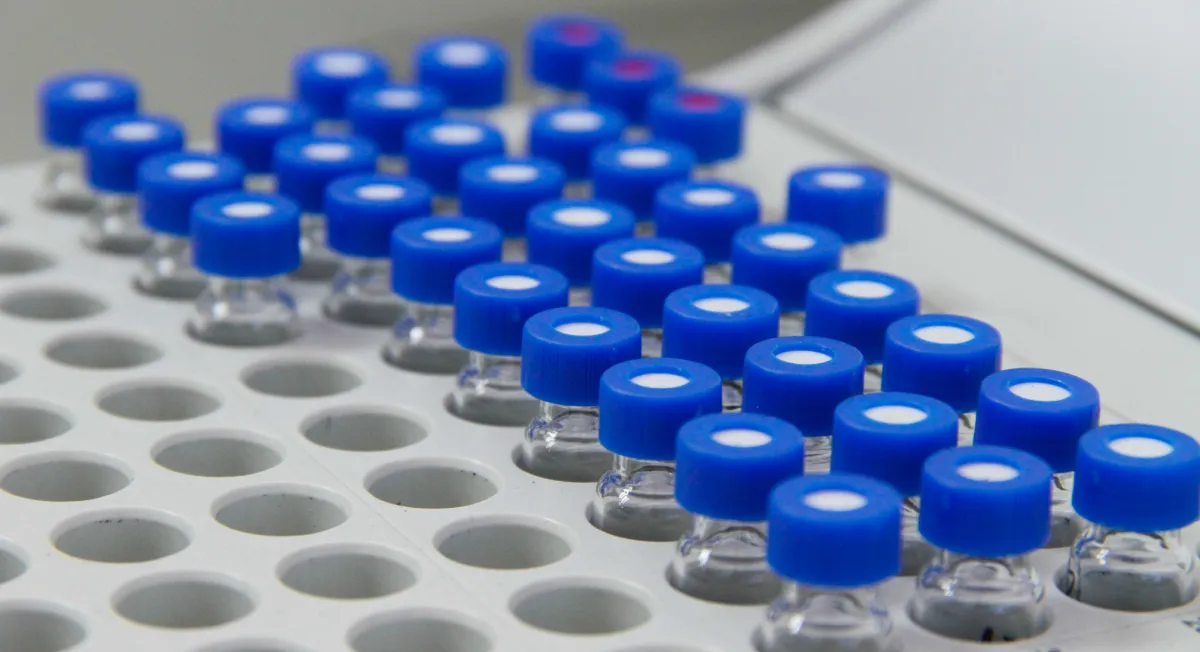

In an ideal scenario, a solution injected and analyzed multiple times under identical conditions would give the same signal response every time. In practice, the signal varies with analytical conditions, including inconsistencies in sample preparation, injection volume, mass spectrometer response, and matrix effects. Hence, it is necessary to include an internal standard (IS) in the analysis. An internal standard is a known compound added at a fixed concentration to every analytical sample. If sample preparation is consistent, the final amount and detector response of the IS will be the same across samples; if it is not, the IS signal changes along with the analyte signal. Thus, the ratio of analyte response to IS response reflects the analyte concentration independent of these conditions.
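To make the ratio idea concrete, here is a minimal Python sketch of calibrating on the analyte-to-IS response ratio and back-calculating a sample concentration. All peak areas, concentrations, and function names are hypothetical values chosen for illustration, and the fit is a plain unweighted least-squares line; a validated method may well use a weighted regression instead.

```python
def response_ratio(analyte_area, is_area):
    """Analyte peak area divided by internal standard peak area."""
    if is_area <= 0:
        raise ValueError("internal standard area must be positive")
    return analyte_area / is_area


def fit_line(xs, ys):
    """Unweighted least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical calibration standards:
# (nominal concentration in ng/mL, analyte area, IS area)
standards = [
    (1.0, 1000.0, 50000.0),
    (5.0, 5000.0, 50000.0),
    (10.0, 10000.0, 50000.0),
    (50.0, 50000.0, 50000.0),
]

concentrations = [conc for conc, _, _ in standards]
ratios = [response_ratio(a, i) for _, a, i in standards]
slope, intercept = fit_line(concentrations, ratios)


def back_calculate(analyte_area, is_area):
    """Convert a sample's response ratio into a concentration."""
    return (response_ratio(analyte_area, is_area) - intercept) / slope


# Hypothetical sample whose analyte and IS signals are both 40% lower
# than expected (for example, from a processing loss).
sample_conc = back_calculate(12000.0, 30000.0)
print(f"Back-calculated concentration: {sample_conc:.2f} ng/mL")
```

In this made-up data set the sample's raw analyte area (12000) alone would suggest about 12 ng/mL, while the ratio-based calculation gives about 20 ng/mL because the IS area dropped by the same fraction.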

When using mass spectrometry standards, it is essential to follow LC-MS best practices to ensure accurate and reliable results. One such practice is the consistent use of internal standards in all analytical runs. Internal standards help correct for variability and improve the precision of quantitative analyses.

The internal standard method is crucial for achieving accurate and reliable results in analytical chemistry. By applying it, scientists can compensate for variability in the analytical process, so that the measured concentration of an analyte remains consistent despite fluctuations in experimental conditions. This technique is vital for complex analyses such as those conducted using high-performance liquid chromatography (HPLC) and liquid chromatography-mass spectrometry (LC-MS). Combining well-chosen mass spectrometry standards with LC-MS best practices further improves the reliability of results.

One challenge for all bioanalytical laboratories is that the IS can respond to analytical conditions differently from the analyte. In that case, the analyte-to-IS ratio no longer cancels the variation, and concentrations calculated with the IS as the benchmark are biased. Thus, selecting the right internal standard is critical to maintaining the accuracy of the results. The ideal internal standard should closely mimic the analyte’s behavior under the same conditions to provide a reliable reference. Pairing a well-matched IS with appropriate mass spectrometry standards helps cover the potential variation in response.
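The effect of a mismatched IS can be sketched numerically. The Python snippet below is an illustration only: the recovery fractions are invented, and `ratio_bias_percent` is a hypothetical helper, not part of any instrument software. It compares an IS that loses signal in step with the analyte against one that does not.

```python
def ratio_bias_percent(analyte_recovery, is_recovery):
    """Percent bias in the analyte/IS ratio when each signal is scaled
    by the given fraction of its expected response (1.0 = no loss)."""
    if is_recovery <= 0:
        raise ValueError("IS recovery must be positive")
    return (analyte_recovery / is_recovery - 1.0) * 100.0


# Case 1: the IS tracks the analyte (both lose 40% of their signal).
tracking_bias = ratio_bias_percent(0.6, 0.6)

# Case 2: the IS is less affected than the analyte (10% vs 40% loss).
mismatch_bias = ratio_bias_percent(0.6, 0.9)

print(f"IS tracks analyte:  {tracking_bias:+.1f}% bias")
print(f"IS does not track:  {mismatch_bias:+.1f}% bias")
```

When both signals are scaled by the same fraction the ratio is unchanged, whereas in the second case the ratio, and therefore the reported concentration, comes out roughly a third too low.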

Hence, the question is: which internal standard is best for a study? The answer lies in choosing an IS with chemical and physical properties similar to those of the analyte and verifying that its response is consistent across all samples. This approach mitigates the issues associated with varying responses and supports the accuracy and reliability of the internal standard method in HPLC, LC-MS, and other analytical techniques.

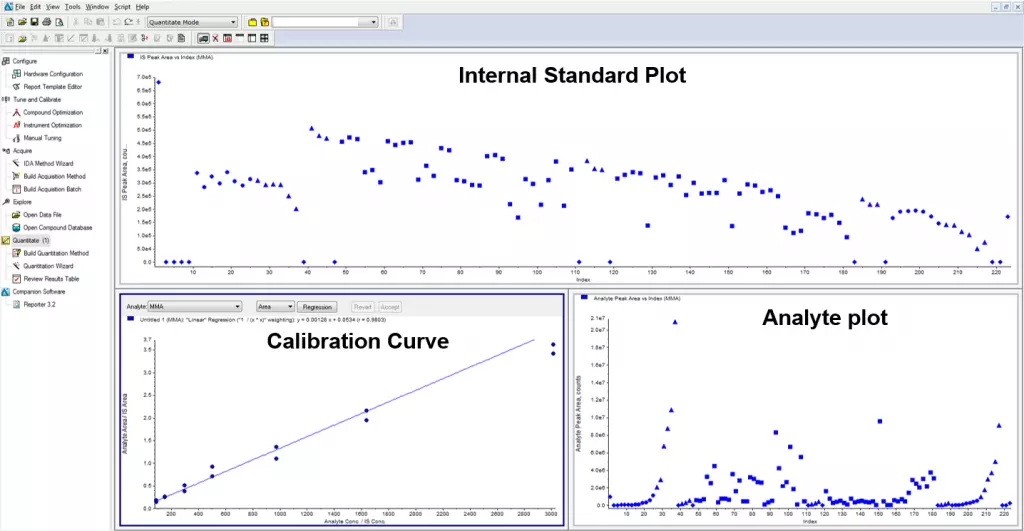

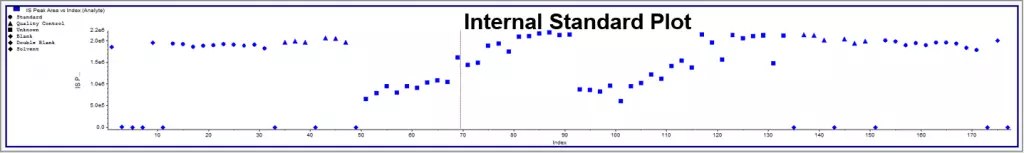

Under the same conditions, the IS and the analyte should behave as similarly as possible. Here, behavior includes retention time, ionization effects, mass spectrometer response, and so on. Only then can we be sure that the calculated concentration is accurate. Hence, a stable isotope-labeled compound that the mass spectrometer can distinguish from the analyte by mass is typically a solid choice as an internal standard. In Figure 1, several samples show low signals for both the analyte and the IS. Without the IS, these samples would appear to have low concentrations. Because both signals drop together, the ratio of analyte to IS is unaffected, so the calculated concentrations do not change. In Figure 2, the IS response drifts at the end of the run. This could be due to the instrument losing sensitivity. Still, the run passed because the analyte signal was lost proportionally, leaving the response ratio between the analyte and IS correct.

When the client accepts bioanalytical data generated without a stable isotope-labeled IS, the choice of internal standard follows a “fit-for-purpose” approach. When a deuterated standard isn’t readily available, an alternative is to use a mixture of three different IS with different masses, retention times, and structures. After the sample run on the mass spectrometer, check each IS response for consistent levels and for drifting, along with the accuracy of the standards and QCs. Then choose the IS with the best response and process that batch with it. Because a chosen IS can occasionally behave inconsistently, having an alternate ready is a good idea. Although it adds work during method development, this approach saves valuable time and client resources in the long run.
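One simple way to compare candidate internal standards after a run is to look at how much each IS response varies across injections. The Python sketch below assumes the percent coefficient of variation (%CV) as the consistency metric and uses invented peak areas and candidate names; it covers only the IS consistency check, not the accuracy of the standards and QCs, which still has to be reviewed separately.

```python
from statistics import mean, stdev


def percent_cv(values):
    """Percent coefficient of variation (sample standard deviation / mean)."""
    return stdev(values) / mean(values) * 100.0


def pick_most_consistent(is_areas_by_candidate):
    """Return the candidate with the lowest %CV and the %CV of each one."""
    cvs = {name: percent_cv(areas) for name, areas in is_areas_by_candidate.items()}
    best = min(cvs, key=cvs.get)
    return best, cvs


# Hypothetical IS peak areas across five injections, in injection order.
candidates = {
    "IS_A": [50000, 49000, 51000, 50500, 49500],  # stable response
    "IS_B": [50000, 45000, 40000, 35000, 30000],  # steady downward drift
    "IS_C": [50000, 60000, 42000, 55000, 38000],  # erratic response
}

best, cvs = pick_most_consistent(candidates)
for name, cv in cvs.items():
    print(f"{name}: {cv:.1f}% CV")
print(f"Most consistent candidate: {best}")
```

A low %CV alone does not prove that the IS tracks the analyte, so a candidate chosen this way would normally still be judged against the accuracy of the calibration standards and QCs in the same batch.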

Selecting the internal standard carefully and monitoring it throughout each run allows analysts to maintain the integrity of their results and provide robust bioanalytical data. In summary, adherence to LC-MS best practices and proper selection of internal standards are essential for achieving precise and dependable analytical outcomes.

Figure 1. Internal standard response for a sample run. The IS response is consistent across the standards and QCs; the difference in IS response between the standards and the samples is due to either analytical conditions or sample processing. However, by using a deuterated internal standard, the ratio of analyte to IS could still be calculated accurately.

Figure 2. This sample run shows the importance of the internal standard in correcting for differences in detector response during the run. Despite the low IS response, the standard curves are close (top). Without the IS, the curves would be very different (bottom), and the data would not be reliable.